AI news site: https://www.marktechpost.com/

Core algorithm fundamentals and AI model design (my CSDN technical blog): https://blog.csdn.net/weixin_41194129/category_11362509.html

AI algorithm learning community: https://github.com/Algorithm-learning-community-for-python

YOLO series resource collection: https://github.com/KangChou/Cver4s

NVIDIA CUDA programming: https://github.com/KangChou/deepcv_project_demo/tree/main/CUDA%E7%BC%96%E7%A8%8B

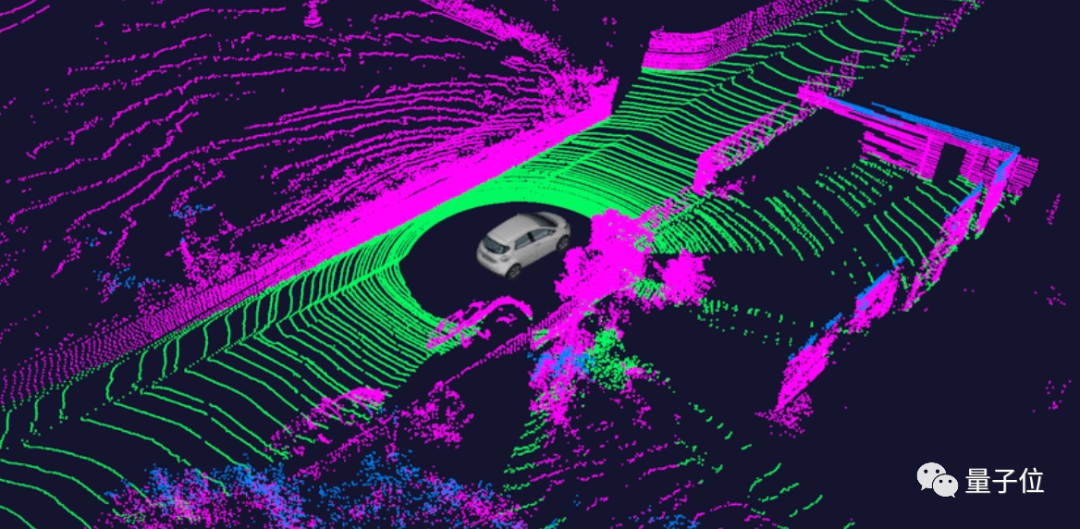

Point cloud technology for autonomous driving: https://github.com/KangChou/deepcv_project_demo/tree/main/CVPR/point-cloud

Computer vision technology: https://github.com/KangChou/deepcv_project_demo/tree/main/CVPR/visual

Professional chatbot: https://github.com/salesforce/Converse

First-generation creative-writing AI based on open-source GPT-2 | extensible, evolvable: https://github.com/EssayKillerBrain/EssayKiller_V2

Collection of high-quality Chinese pre-trained models: https://github.com/CLUEbenchmark/CLUEPretrainedModels

Basic natural language models: https://github.com/lpty/nlp_base

End-to-end BERT workflow, from training to deployment: https://github.com/xmxoxo/BERT-train2deploy

Chinese BERT-wwm model series: https://github.com/ymcui/Chinese-BERT-wwm

Introductory deep learning tutorials and recommended articles: https://github.com/Mikoto10032/DeepLearning

Practical resources on 3D vision, VSLAM, and computer vision: https://github.com/qxiaofan/awesome_3d_slam_resources

Autonomous driving system implementation: https://github.com/sunmiaozju/smartcar

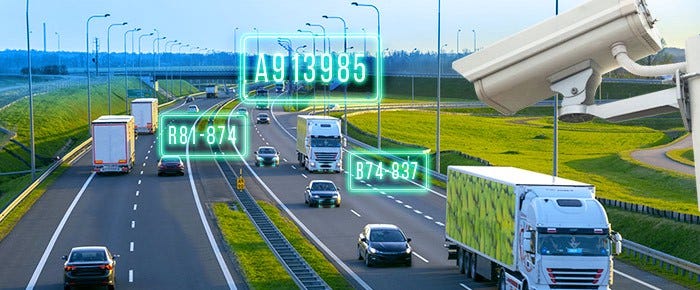

Automatic recognition of ID cards, bank cards, driver's licenses, and vehicle licenses: https://github.com/wenchaosong/OCR_identify

MVision (machine vision): https://github.com/Ewenwan/MVision

Computer Vision: Algorithms and Applications: https://szeliski.org/Book/

LiDAR point cloud processing for autonomous driving: https://github.com/beedotkiran/Lidar_For_AD_references

Dynamic semantic SLAM: object detection + VSLAM + dynamic object detection via optical flow/multi-view geometry + OctoMap + object database: https://github.com/Ewenwan/ORB_SLAM2_SSD_Semantic

Research on video-based object detection algorithms: https://github.com/guanfuchen/video_obj

TensorRT-7 Network: https://github.com/Syencil/tensorRT

C++ TensorRT-CenterNet: https://github.com/CaoWGG/TensorRT-CenterNet

yolox-deepsort: https://github.com/Sharpiless/yolox-deepsort

BirdNet+: end-to-end 3D object detection in LiDAR bird's-eye view: https://github.com/AlejandroBarrera/birdnet2

Development kit for the nuScenes dataset: https://github.com/nutonomy/nuscenes-devkit

A robust LiDAR Odometry and Mapping (LOAM) package for Livox-LiDAR: https://github.com/hku-mars/loam_livox

LiDAR papers: https://arxiv.org/search/?query=+LiDAR&searchtype=all&source=header

Accelerating point cloud processing on Jetson with CUDA PCL: https://developer.nvidia.com/zh-cn/blog/cuda-pcl-1-0-jetson/

PCT: Point Cloud Transformer: https://github.com/MenghaoGuo/PCT